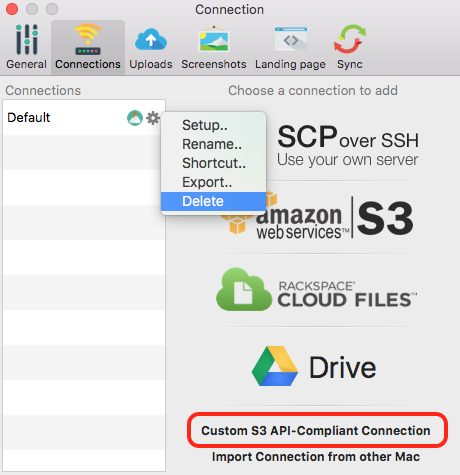

Article provided by Stefan Zweifel - thank you!

Here's a quick step-by-step guide on how I've created a new S3 bucket, Cloudfront distribution and lifecycle rule to replicate my current setup on AWS.

In this post I'm using the domain as an example. Update the mention of the domain when you apply this guide to your own use case.

Create a new S3 bucket in the AWS Console called. Uncheck the "Block all public access" setting. The files we will upload via Dropshare have to be public, after all.

Now we could use the "Virtual Hosting" feature of S3 to use to serve our files. You would have to add a CNAME DNS record like this. This CNAME record would allow us to access the uploaded files via HTTP on but not under HTTPS.

SSL certificate and Cloudfront distribution

Let's add an SSL certificate so we can serve our files under HTTPS. We create the SSL certificate through the Certificate Manager. It's important that you select the us-east-1 region to make this all work. Click on "Request a Certificate" and follow the instructions. I've chosen to validate my domain through DNS. In a matter of seconds the SSL certificate was issued and ready to be used.

We can't assign the newly created SSL certificate to our S3 bucket directly, so we have to put a Cloudfront distribution in between. Open the Cloudfront Console and click on "Create Distribution". Under "Origin Domain Name" select your S3 bucket. The UI should auto-select a bunch of settings; feel free to adjust them to your liking or keep them as they are. Under "Distribution Settings" add our custom domain to the "Alternate Domain Names (CNAMEs)" field. Under "SSL Certificate" select "Custom SSL Certificate ()" and pick our previously created SSL certificate from the list of options. Click on "Create Distribution" to deploy the distribution. This might take a while.

After your distribution has been successfully deployed, you should see it in the index table. Copy the value of "domain name" to your clipboard. For me the value looks something like this:

In the DNS settings for your domain, now add a new CNAME record for that points to the Cloudfront distribution. After DNS propagation, our uploaded and shared files should be available under (or whatever domain you're using).

Let's set up Dropshare to use our S3 bucket. In Dropshare, go to "Settings" → "Connections" → "+ New Connection" → "Third Party Cloud" → "AWS S3". If you have a dedicated Amazon user for Dropshare, great! If not, it's best to follow Dropshare's documentation on how to create a new user with the correct permissions.

In the beginning of this post I mentioned that on my old setup, I used a shell script to automatically delete files older than 24 hours. You can replicate this behaviour with S3's lifecycle rules. In your bucket settings, navigate to "Management" → "Lifecycle Rules". Give the rule a good name and select the scope "This rule applies to all objects in the bucket". Acknowledge that this rule will apply to all items in this bucket. Select the "Expire current versions of objects" action in the list. In "Number of days after object creation", enter the number of days after which files should automatically be deleted.

Mounting Amazon S3 Cloud Storage in Linux

AWS provides an API to work with Amazon S3 buckets using third-party applications. You can even write your own application that interacts with S3 buckets through the Amazon API. For example, you can create an application that uses the same path for uploading files to Amazon S3 cloud storage. You then provide that same path on each computer by mounting the S3 bucket to the same directory with S3FS. In this tutorial we use S3FS to mount an Amazon S3 bucket as a disk drive to a Linux directory.

S3FS is a solution based on FUSE (file system in user space). It mounts S3 buckets to directories of Linux operating systems, similar to the way you mount a CIFS or NFS share as a network drive. After mounting Amazon S3 cloud storage with S3FS on your Linux machine, you can use cp, mv, rm, and other commands in the Linux console to work with files, just as you do with mounted local or network drives. S3FS is written in C++, and you can familiarize yourself with its source code on GitHub.

Let's find out how to mount an Amazon S3 bucket to a Linux directory, using Ubuntu 18.04 LTS as an example. A fresh installation of Ubuntu is used in this walkthrough. If FUSE is already installed on your Linux system, remove it before configuring the environment and installing s3fs-fuse to avoid conflicts. As we're using a fresh installation of Ubuntu, we don't need to run the sudo apt-get remove fuse command. Install s3fs from the online software repositories. You also need to generate an access key ID and a secret access key in the AWS web interface for your account (IAM user).
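The S3FS walkthrough above ends before the actual mount step. The following is a minimal sketch of that step, not the official procedure. It assumes that s3fs is already installed. It also assumes the access key pair is stored in the credentials-file format documented by s3fs-fuse: one ACCESS_KEY_ID:SECRET_ACCESS_KEY line, readable only by its owner (mode 600). The helper names, bucket name and mount directory are hypothetical placeholders.

```python
from __future__ import annotations

import os
import stat
import subprocess
from pathlib import Path


def write_s3fs_passwd(path: Path, access_key_id: str, secret_access_key: str) -> Path:
    """Write an s3fs credentials file containing ACCESS_KEY_ID:SECRET_ACCESS_KEY.

    s3fs expects this file to be accessible only by its owner (mode 600).
    """
    path = Path(path)
    # Create the file with owner-only permissions from the start.
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    with os.fdopen(fd, "w") as fh:
        fh.write(f"{access_key_id}:{secret_access_key}\n")
    # os.open only applies the mode on creation, so enforce 600 for pre-existing files too.
    os.chmod(path, stat.S_IRUSR | stat.S_IWUSR)
    return path


def s3fs_mount_command(bucket: str, mountpoint: str, passwd_file: Path) -> list[str]:
    """Build the argv for: s3fs BUCKET MOUNTPOINT -o passwd_file=FILE"""
    return ["s3fs", bucket, mountpoint, "-o", f"passwd_file={passwd_file}"]


def mount_bucket(bucket: str, mountpoint: str, passwd_file: Path) -> None:
    """Create the mount directory if needed and run s3fs (requires s3fs on PATH)."""
    os.makedirs(mountpoint, exist_ok=True)
    subprocess.run(s3fs_mount_command(bucket, mountpoint, passwd_file), check=True)
```

As a usage example, first call `write_s3fs_passwd(Path.home() / ".passwd-s3fs", "<ACCESS_KEY_ID>", "<SECRET_ACCESS_KEY>")`, then `mount_bucket("my-bucket", "/mnt/s3-bucket", passwd)`, where `passwd` is the returned path. After that, cp, mv and rm work on /mnt/s3-bucket. Since this is a FUSE mount, `fusermount -u /mnt/s3-bucket` unmounts it again.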
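The Dropshare lifecycle rule set up earlier in the console has two parts: the scope "all objects in the bucket" and the action "Expire current versions of objects" after N days. Together these correspond to a single-rule S3 lifecycle configuration. The sketch below assumes you would rather script the rule with boto3 than click through the console. The rule ID and day count are placeholders, and boto3 is only imported inside the function that actually calls AWS.

```python
from __future__ import annotations


def expire_all_objects_rule(rule_id: str, days: int) -> dict:
    """Lifecycle configuration that expires current object versions after `days` days.

    Mirrors the console steps: scope = every object in the bucket,
    action = "Expire current versions of objects".
    """
    if days < 1:
        raise ValueError("S3 lifecycle expiration needs a positive number of days")
    return {
        "Rules": [
            {
                "ID": rule_id,
                "Filter": {"Prefix": ""},  # empty prefix matches every object
                "Status": "Enabled",
                "Expiration": {"Days": days},
            }
        ]
    }


def apply_rule(bucket: str, config: dict) -> None:
    """Upload the configuration to S3 (needs boto3 and AWS credentials)."""
    import boto3  # lazy import: building the config above works without boto3

    s3 = boto3.client("s3")
    s3.put_bucket_lifecycle_configuration(Bucket=bucket, LifecycleConfiguration=config)
```

Keep in mind that `put_bucket_lifecycle_configuration` replaces the bucket's entire lifecycle configuration, so merge in any existing rules first. S3 also rounds the expiry time up to the next midnight UTC and deletes objects asynchronously. As a result, with `Days=1` a file can stay available noticeably longer than the strict 24 hours of the old shell script.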
